The convergence of advanced computing and optical engineering is revolutionizing smartphone photography, transforming ordinary phone cameras into sophisticated imaging systems. Computational cameras represent a fundamental shift in how we capture images, replacing traditional single-shot photography with multi-frame processing that analyzes and combines a burst of exposures in a fraction of a second. By leveraging machine learning algorithms and powerful mobile processors, these systems can capture detail in both bright highlights and deep shadows, reduce noise in low-light conditions, and even detect and enhance faces – all before you see the final image on your screen. This marriage of optics and algorithms has democratized high-quality photography, allowing anyone with a modern smartphone to capture images that would have required thousands of dollars of specialized equipment just a decade ago.

What Makes a Camera ‘Computational’?

Hardware Meets Software

In modern computational cameras, the marriage of hardware and software creates a powerful synergy that transforms how we capture images. Physical components like sensors, lenses, and processors work seamlessly with advanced algorithms and features to deliver exceptional results. The sensor captures raw light data, while specialized image processors immediately begin analyzing and enhancing this information.

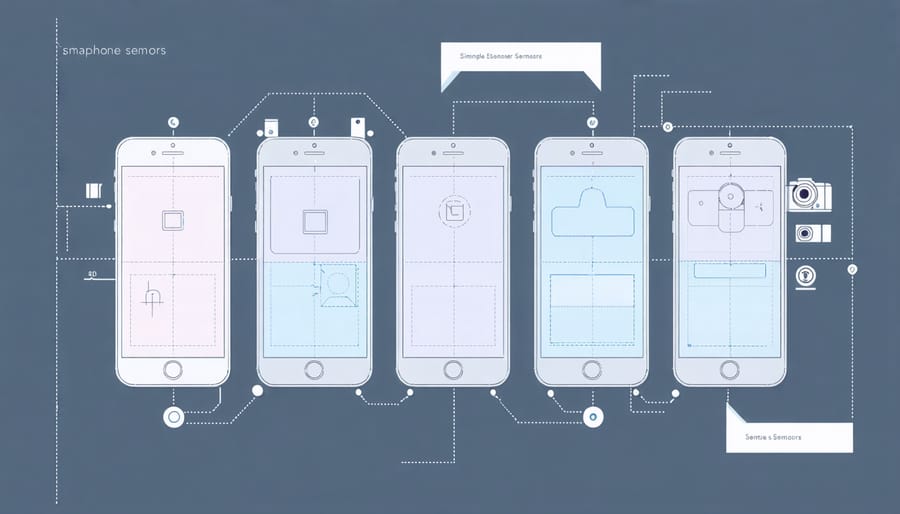

Multiple camera modules now work in concert, each serving a specific purpose – from ultra-wide landscapes to detailed macro shots. These hardware elements feed data to sophisticated AI systems that can recognize scenes, detect faces, and even estimate depth in real time. The neural processing units (NPUs) in modern devices can perform trillions of operations per second, enabling features like night mode, portrait effects, and HDR processing.

What’s fascinating is how quickly this hardware-software integration happens. When you press the shutter button, several exposures are captured in rapid succession and processed together, with AI making split-second decisions about how to combine and enhance these images for the best possible result.

Multiple Sensors, One Perfect Shot

Modern smartphones are like having multiple photographers working in perfect harmony, each specializing in different aspects of your shot. When you press the shutter button, your phone doesn’t just capture a single image – it simultaneously collects data from various sensors to create the perfect photo.

Typical flagship smartphones today combine information from multiple cameras, each with different focal lengths and purposes. The main sensor captures detail and color, while dedicated telephoto and ultra-wide sensors expand creative possibilities. But it doesn’t stop there. Depth sensors measure the distance to subjects, enabling those beautifully blurred backgrounds in portrait mode, while specialized light sensors ensure proper exposure in challenging conditions.

What makes this system truly remarkable is how these sensors work together. In a fraction of a second, your phone’s image processor analyzes and combines data from all these sources. It might take the sharpness from one sensor, the color accuracy from another, and the depth information from a third to create a single, optimized image. This multi-sensor approach helps overcome the physical limitations of small smartphone cameras, delivering results that were once possible only with professional equipment.

Game-Changing Features Today’s Phones Offer

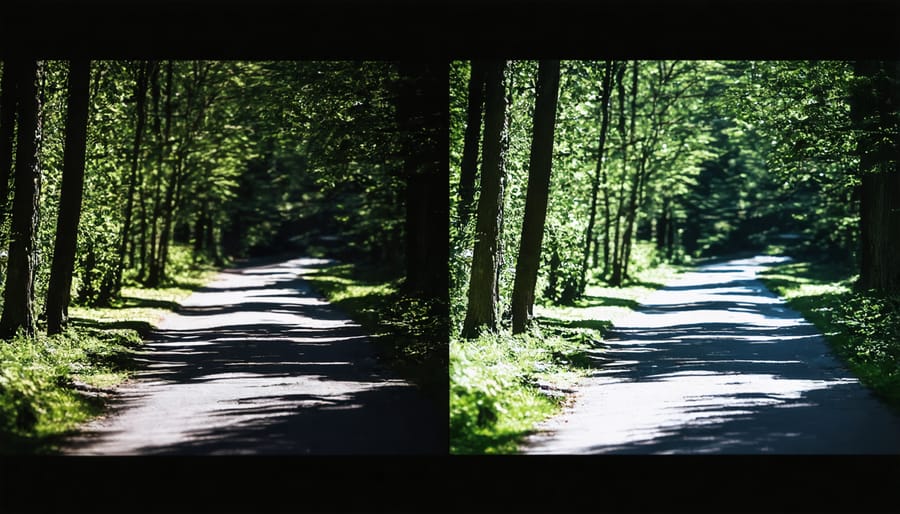

HDR and Night Mode

Modern smartphones use sophisticated computational methods to capture stunning low-light photos and handle challenging lighting conditions. HDR (High Dynamic Range) processing combines multiple exposures taken in quick succession, each optimized for different brightness levels. When you press the shutter, your phone captures several images – some exposing for bright areas like the sky, others for darker regions like shadows.

The computational engine then analyzes these frames, selecting the best details from each exposure and merging them into a single photo that preserves both highlight and shadow detail. This process happens so quickly that you barely notice it’s taking multiple shots.
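To make the merging step concrete, here is a minimal sketch of an exposure-fusion-style blend. It is not any phone maker's actual pipeline: it assumes the frames are already aligned, grayscale, and scaled to the range 0 to 1, and the names `well_exposedness` and `fuse_exposures`, the `sigma` value, and the sample pixel values are all hypothetical. Each pixel is weighted by how close it sits to mid-gray, so clipped highlights and crushed shadows contribute very little.

```python
import math

def well_exposedness(value, sigma=0.2):
    """Weight that peaks for mid-tones (0.5) and falls off toward 0 and 1."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(frames, sigma=0.2):
    """Blend aligned grayscale frames (rows of floats in [0, 1]) pixel by pixel."""
    height, width = len(frames[0]), len(frames[0][0])
    fused = []
    for y in range(height):
        row = []
        for x in range(width):
            values = [frame[y][x] for frame in frames]
            weights = [well_exposedness(v, sigma) for v in values]
            # Weighted average: well-exposed pixels dominate the result.
            row.append(sum(w * v for w, v in zip(weights, values)) / sum(weights))
        fused.append(row)
    return fused

# Hypothetical 1x2 scene: a shadow pixel and a sky pixel, shot at two exposures.
short_exposure = [[0.02, 0.55]]  # sky well exposed, shadow nearly black
long_exposure = [[0.45, 1.00]]   # shadow well exposed, sky clipped
fused = fuse_exposures([short_exposure, long_exposure])
```

In this toy case the shadow pixel ends up close to its value in the long exposure and the sky pixel close to its value in the short exposure, which is the "best details from each frame" behavior described above.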

Night mode takes this concept even further, using artificial intelligence and advanced algorithms to brighten dark scenes while maintaining natural colors and reducing noise. The phone might capture up to 10 seconds’ worth of exposures, automatically compensating for hand movement using gyroscope data. It then aligns and combines these frames, enhancing detail in dark areas while preserving the ambiance of the scene.
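The align-and-combine idea can be illustrated with a small simulation. This is a sketch under heavy assumptions, not a real night mode: the "scene" is a one-dimensional row of pixels, hand shake is a whole-pixel shift that is assumed to be known exactly (standing in for gyroscope data), shifts wrap around at the edges, and all function names and numbers are hypothetical. What it does show is why stacking helps: averaging N aligned frames reduces random noise by roughly a factor of √N.

```python
import random
import statistics

def capture_frame(scene, offset, noise_sigma, rng):
    """Simulate one short, noisy exposure shifted by `offset` pixels of hand shake."""
    width = len(scene)
    return [scene[(x + offset) % width] + rng.gauss(0.0, noise_sigma)
            for x in range(width)]

def align(frame, offset):
    """Undo a known integer shift (assumed here to come from gyroscope data)."""
    width = len(frame)
    return [frame[(x - offset) % width] for x in range(width)]

def merge(frames):
    """Average aligned frames pixel by pixel."""
    return [sum(pixels) / len(pixels) for pixels in zip(*frames)]

rng = random.Random(0)
scene = [0.05 + 0.02 * (x % 10) for x in range(200)]  # dim, hypothetical scene
offsets = [rng.randint(-3, 3) for _ in range(16)]     # simulated hand shake
frames = [capture_frame(scene, o, 0.05, rng) for o in offsets]
merged = merge([align(f, o) for f, o in zip(frames, offsets)])

def noise_level(image):
    """Standard deviation of the difference between an image and the true scene."""
    return statistics.pstdev([a - b for a, b in zip(image, scene)])

single_noise = noise_level(align(frames[0], offsets[0]))
merged_noise = noise_level(merged)
```

With 16 frames, `merged_noise` should come out at roughly a quarter of `single_noise`. Real pipelines also have to estimate the shifts, handle sub-pixel motion, and reject moving subjects, none of which is modeled here.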

What’s particularly impressive is how these features work adaptively. The software analyzes the scene in real time, determining whether to activate HDR, night mode, or both, and adjusting parameters like exposure time and frame count based on available light and subject movement. This intelligent automation helps anyone achieve professional-looking results in challenging lighting conditions.
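As a rough illustration of this kind of decision logic, the sketch below picks a capture plan from three scene measurements. Every name and threshold in it is hypothetical and chosen only for the example; real cameras use far more inputs and tuned or learned policies.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
NIGHT_LUX_THRESHOLD = 10.0     # below this, treat the scene as "dark"
HDR_STOPS_THRESHOLD = 8.0      # above this, one exposure cannot cover the range
FAST_MOTION_PX_PER_S = 50.0    # above this, shorten exposures to limit blur

@dataclass
class CapturePlan:
    use_hdr: bool
    use_night_mode: bool
    frame_count: int
    exposure_ms: float

def plan_capture(scene_lux, dynamic_range_stops, subject_speed_px_per_s):
    """Choose modes, frame count, and per-frame exposure from scene measurements."""
    use_night_mode = scene_lux < NIGHT_LUX_THRESHOLD
    use_hdr = dynamic_range_stops > HDR_STOPS_THRESHOLD

    # Dark scenes get longer exposures; fast subjects get shorter ones.
    exposure_ms = 100.0 if use_night_mode else 10.0
    if subject_speed_px_per_s > FAST_MOTION_PX_PER_S:
        exposure_ms /= 4

    # More frames when there is more to merge.
    frame_count = 1
    if use_hdr:
        frame_count = 3
    if use_night_mode:
        frame_count = max(frame_count, 8)
    return CapturePlan(use_hdr, use_night_mode, frame_count, exposure_ms)

sunny_backlit = plan_capture(500.0, 12.0, 0.0)
dim_street_with_cyclist = plan_capture(2.0, 5.0, 100.0)
```

Here the bright, high-contrast scene gets a three-frame HDR burst, while the dark scene with a moving subject gets an eight-frame night-mode burst at a shortened exposure.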

Portrait Mode and Depth Mapping

Portrait mode has revolutionized smartphone photography by bringing professional-looking depth effects to everyday shots. Using a combination of hardware (multiple camera lenses) and sophisticated AI algorithms, modern phones can create artificial bokeh – that coveted background blur that traditionally required expensive DSLR cameras and fast lenses.

The magic happens through depth mapping, where the phone creates a detailed 3D map of the scene. It identifies the subject’s edges and determines the distance between different elements in the frame. This process relies on several techniques, including stereo vision (comparing images from multiple cameras), Time-of-Flight sensors, and machine learning models trained on millions of photos.
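For the stereo vision case, the underlying geometry is simple enough to sketch. Two cameras a known distance apart see the same point at slightly different horizontal positions; that offset is the disparity, and for an idealized, rectified camera pair depth is focal length times baseline divided by disparity. The focal length and baseline below are hypothetical values picked for the example, not the specs of any particular phone.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Depth in meters for an idealized, rectified stereo pair.

    disparity_px: horizontal shift of a point between the two images, in pixels
    focal_length_px: focal length expressed in pixels
    baseline_m: distance between the two camera centers, in meters
    """
    if disparity_px <= 0:
        return float("inf")  # no measurable shift: point is effectively at infinity
    return focal_length_px * baseline_m / disparity_px

# Hypothetical phone-like numbers: 2800 px focal length, 12 mm between lenses.
FOCAL_LENGTH_PX = 2800.0
BASELINE_M = 0.012

near_subject_m = depth_from_disparity(16.8, FOCAL_LENGTH_PX, BASELINE_M)  # about 2 m
far_wall_m = depth_from_disparity(3.36, FOCAL_LENGTH_PX, BASELINE_M)      # about 10 m
```

Because the lenses on a phone sit so close together, distant objects produce very small disparities, which is one reason stereo depth is usually combined with the other techniques mentioned above.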

What’s particularly impressive is how these systems handle complex scenarios like hair strands, glasses, or multiple subjects. While early portrait modes struggled with these challenges, today’s algorithms are remarkably sophisticated. They can even adjust the level of blur after the photo is taken, something that’s impossible with traditional optical bokeh.

However, computational portrait mode isn’t perfect. You might notice artifacts around complex edges or slight inconsistencies in blur transitions. These quirks often appear in challenging lighting conditions or with subjects wearing intricate patterns. Despite these limitations, the technology continues to improve, with each new smartphone generation bringing more natural-looking results and better edge detection.

For best results, ensure good lighting and maintain some distance between your subject and the background. This helps the depth mapping system create more accurate separation and more pleasing bokeh effects.
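To see why that separation matters, here is a minimal sketch of depth-based background blur. It assumes a grayscale image and a matching per-pixel depth map already exist, and it uses a simple box blur with a hard depth cutoff; the function names, the `tolerance_m` parameter, and the sample data are hypothetical. Production portrait modes use soft mattes and lens-like blur kernels instead.

```python
def box_blur_at(image, y, x, radius):
    """Average the pixels in a square window around (y, x), clipped at the borders."""
    height, width = len(image), len(image[0])
    window = [
        image[j][i]
        for j in range(max(0, y - radius), min(height, y + radius + 1))
        for i in range(max(0, x - radius), min(width, x + radius + 1))
    ]
    return sum(window) / len(window)

def portrait_blur(image, depth_m, subject_depth_m, tolerance_m=0.5, radius=1):
    """Keep pixels near the subject's depth sharp and blur everything else."""
    result = []
    for y, row in enumerate(image):
        out_row = []
        for x, value in enumerate(row):
            if abs(depth_m[y][x] - subject_depth_m) <= tolerance_m:
                out_row.append(value)  # subject: leave untouched
            else:
                out_row.append(box_blur_at(image, y, x, radius))  # background
        result.append(out_row)
    return result

# Hypothetical one-row image: subject at 1 m on the left, background at 4 m.
image = [[1.0, 0.0, 1.0, 0.0]]
depth_m = [[1.0, 1.0, 4.0, 4.0]]
blurred = portrait_blur(image, depth_m, subject_depth_m=1.0)
```

When the subject and background depths are far apart, the cutoff is easy to place. When they are within `tolerance_m` of each other, background pixels are mistaken for the subject and stay sharp, which is the kind of edge artifact described earlier. Because the blur is applied from a stored depth map, changing `radius` after capture is just a matter of re-running the function.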

Real-time Image Processing

Real-time image processing is one of the most impressive features of modern computational cameras, working tirelessly behind the scenes to enhance your photos as you shoot. When you press the shutter button, your camera doesn’t just capture a single moment – it’s constantly analyzing and adjusting multiple aspects of your image in milliseconds.

Think of it as having a professional photo editor working at lightning speed inside your camera. As you frame your shot, the camera’s processor is already making intelligent decisions about exposure, white balance, and focus. It can detect faces, analyze the scene’s lighting conditions, and even predict motion, all before you’ve fully pressed the shutter button.
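White balance is a good example of a decision that can be made from the preview frames alone. The sketch below uses the classic gray-world heuristic, which assumes the average color of a scene should be neutral gray. It is shown only as an illustration; phones typically rely on more elaborate, often learned, methods, and the function names and sample pixels here are hypothetical.

```python
def gray_world_gains(pixels):
    """Return per-channel gains that equalize the mean of R, G, and B."""
    count = len(pixels)
    means = [sum(p[c] for p in pixels) / count for c in range(3)]
    gray = sum(means) / 3
    # Guard against an all-black channel to avoid dividing by zero.
    return [gray / m if m > 0 else 1.0 for m in means]

def apply_gains(pixels, gains):
    """Scale each channel by its gain, clipping at the maximum value of 1.0."""
    return [tuple(min(1.0, v * g) for v, g in zip(p, gains)) for p in pixels]

# Hypothetical pixels of a gray wall lit by warm indoor light (RGB in [0, 1]).
warm_pixels = [(0.8, 0.5, 0.2), (0.4, 0.25, 0.1)]
gains = gray_world_gains(warm_pixels)
balanced = apply_gains(warm_pixels, gains)
```

After correction, both sample pixels come out neutral, with red reduced and blue boosted. The heuristic fails on scenes dominated by one color, such as a close-up of grass, which is part of why scene recognition is useful here.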

For instance, when you’re shooting in challenging lighting conditions, the real-time processor can automatically bracket multiple exposures, combining them instantly to create a perfectly balanced image. In portrait mode, it’s simultaneously building a depth map, separating the subject from the background, and applying selective focus effects – all while maintaining natural-looking results.

HDR processing, noise reduction, and lens correction also happen in real time, meaning what you see in your viewfinder is much closer to the final image than ever before. This instant feedback helps photographers make better compositional decisions and reduces the need for extensive post-processing.

These real-time adjustments are particularly valuable when shooting moving subjects or in rapidly changing lighting conditions, where traditional cameras might struggle to keep up.

The Future of Smartphone Photography

AI-Powered Innovation

Artificial intelligence is shaping the future of computational photography, pushing the boundaries of what’s possible with smartphone cameras. AI algorithms are becoming increasingly sophisticated at understanding scenes, recognizing objects, and making split-second decisions about optimal camera settings.

Machine learning models are now capable of identifying multiple subjects in a frame, precisely separating foreground from background, and even predicting moving objects’ trajectories for better action shots. These advances are making features like portrait mode and night photography more reliable and natural-looking than ever before.

We’re seeing the emergence of intelligent scene optimization that can automatically adjust dozens of parameters using models trained on millions of photos. Some cutting-edge smartphones can now capture and combine multiple exposures in real time, creating HDR images that rival those from professional cameras.

Looking ahead, we can expect AI to enable even more impressive capabilities, such as removing unwanted objects from photos instantly, creating professional-quality lighting effects from simple snapshots, and potentially even generating entirely new perspectives from single images. These developments are transforming smartphones into increasingly powerful creative tools that adapt to and learn from each photographer’s unique style and preferences.

Bridging the DSLR Gap

The gap between smartphones and DSLRs continues to narrow as computational photography advances. While DSLRs still hold advantages in sensor size and optical capabilities, smartphones are increasingly replacing dedicated cameras for many photographers, thanks to intelligent processing.

Modern smartphones can now simulate shallow depth of field, capture stunning night shots, and even produce RAW images with computational enhancements. Features like HDR stacking, multi-frame noise reduction, and AI-powered scene optimization help overcome the physical limitations of small sensors. The ability to instantly process multiple exposures and combine them into a single, superior image gives smartphones an edge in challenging lighting conditions.

Perhaps most impressively, computational cameras can now recognize specific subjects – like faces, pets, or landscapes – and automatically apply optimal processing for each scenario. While they may not match the pure optical quality of a professional DSLR, smartphones have become remarkably capable tools that fit in your pocket, making high-quality photography more accessible than ever before.

Computational photography has revolutionized how we capture and create images, transforming the landscape of both consumer and professional photography. What began as complex algorithms in research labs has become an integral part of our daily photo-taking experience, particularly in smartphone cameras. This technology has democratized advanced photography techniques, allowing anyone with a modern phone to capture stunning HDR images, professional-looking portraits, and even astrophotography shots that were once possible only with specialized equipment.

Looking ahead, computational photography continues to push the boundaries of what’s possible. As artificial intelligence and machine learning capabilities advance, we can expect even more sophisticated features, better low-light performance, and enhanced creative controls. The line between traditional cameras and computational cameras will likely continue to blur, with both technologies borrowing strengths from each other.

The future promises exciting developments in areas like light field photography, real-time image processing, and advanced scene understanding. For photographers and enthusiasts, this means more creative possibilities and better tools to express their vision, while maintaining the authenticity and emotion that make photography such a powerful medium.